The past few months have seen the tech industry debating the potential of large language models, tools that generate output in a human-like manner. And while gains like full-on automation are still a long way from practical use, some large language model benefits have already found application in the business landscape.

So, let’s dive deep into what makes large language models a unique asset for market leaders and which LLM benefits can help your company solve its unique challenges.

What are large language models?

The boom of generative AI and LLMs has been sparked by the remarkable success of OpenAI’s text-generating bot, ChatGPT. But although the notion of LLMs is often associated with ChatGPT, there is a wealth of other models that share similar characteristics.

Essentially, a large language model is a type of AI system trained on massive amounts of text data to understand and generate language. LLMs grew out of natural language processing (NLP) research, and machine learning techniques such as fine-tuning help adapt them to specific business tasks.

The core difference that sets LLMs apart from their predecessors is their ability to take context into account. For example, the extended version of GPT-4 can process up to 32,000 tokens (roughly 25,000 words) of context, which helps it get a better handle on your request. Also, some of the latest language models are multimodal, which makes them suited to different input formats, such as text, images, and, in some cases, video.
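Context windows are measured in tokens rather than words. A commonly cited rule of thumb for English text is that one token corresponds to about three quarters of a word. The minimal sketch below uses that heuristic to estimate whether a document fits a given window; the 0.75 ratio and the 1,000-token budget reserved for the model’s answer are assumptions, and a real tokenizer will produce different counts. The 8,192 and 32,768 figures are the two GPT-4 context sizes offered at launch.

```python
import math

# Rule of thumb (assumption): one token is roughly 0.75 English words.
WORDS_PER_TOKEN = 0.75


def estimate_tokens(text: str) -> int:
    """Estimate how many tokens `text` uses, rounding up to stay on the safe side."""
    return math.ceil(len(text.split()) / WORDS_PER_TOKEN)


def fits_in_context(text: str, context_tokens: int, reserved_for_output: int = 1000) -> bool:
    """Check whether the prompt plus a budget for the model's answer fits the window."""
    return estimate_tokens(text) + reserved_for_output <= context_tokens


if __name__ == "__main__":
    document = "word " * 6000  # a hypothetical 6,000-word document
    print(estimate_tokens(document))         # 8000
    print(fits_in_context(document, 8192))   # False: 8000 + 1000 > 8192
    print(fits_in_context(document, 32768))  # True
```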

Two types of language modeling solutions

When planning to integrate a language model, businesses have two options. The first option is to access the model as is and have all data stored and processed on the infrastructure of a model provider. In this case, you can use the solution on a SaaS basis.

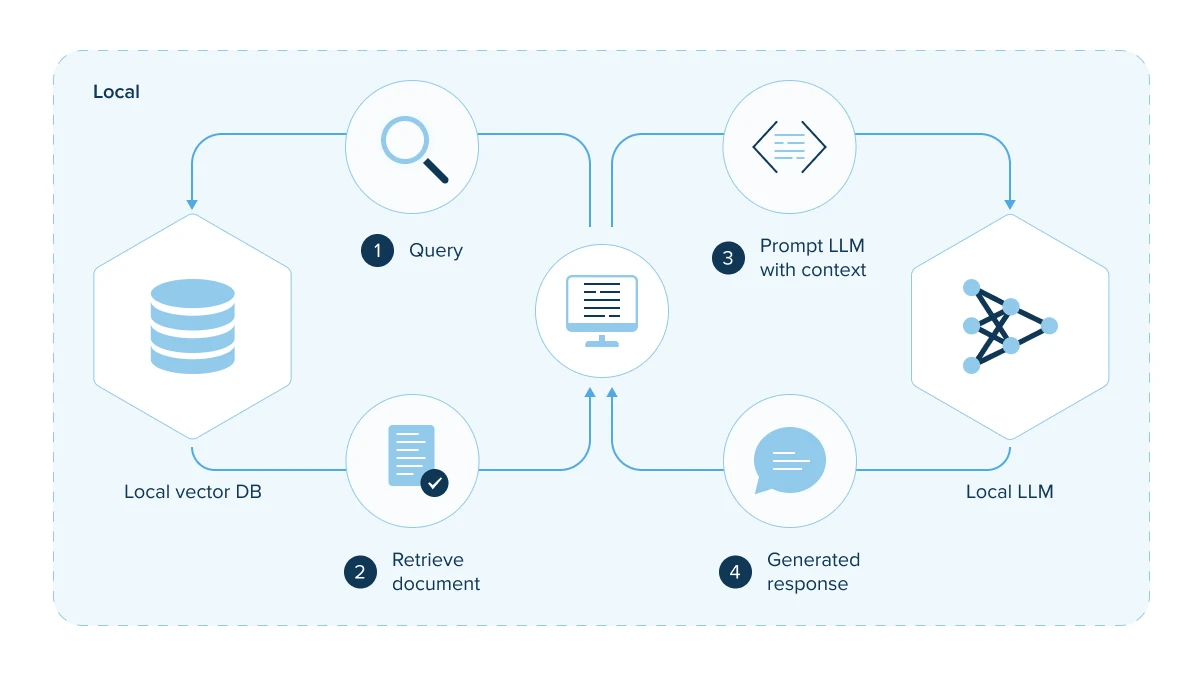

Local Deployment of LLMs


The second option is to deploy the model itself on-premise or on a private cloud. In this case, the company has complete control over the model’s configuration and management and can also unlock a number of other benefits.
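From an application’s point of view, the two options can look very similar: several self-hosted inference servers offer an OpenAI-compatible HTTP interface, so the main visible difference is where the request goes. The sketch below only builds (and never sends) a chat-completion request for either deployment, using Python’s standard `urllib.request`. The private address `10.0.0.5:8000` and the model name `local-model` are hypothetical placeholders, and the assumption that your self-hosted server speaks this request format should be checked against its documentation.

```python
import json
import urllib.request

# Hosted provider endpoint vs. a hypothetical model served inside your own network.
HOSTED_BASE_URL = "https://api.openai.com/v1"
PRIVATE_BASE_URL = "http://10.0.0.5:8000/v1"  # assumption: an OpenAI-compatible server you run


def build_chat_request(base_url: str, model: str, prompt: str, api_key: str = "") -> urllib.request.Request:
    """Build (but do not send) a chat-completion request for the given deployment."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Hosted APIs authenticate with a bearer token; a private server may not need one.
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


if __name__ == "__main__":
    prompt = "Summarize this contract in three bullet points."
    hosted = build_chat_request(HOSTED_BASE_URL, "gpt-4", prompt, api_key="YOUR_API_KEY")
    private = build_chat_request(PRIVATE_BASE_URL, "local-model", prompt)
    print(hosted.full_url)   # https://api.openai.com/v1/chat/completions
    print(private.full_url)  # http://10.0.0.5:8000/v1/chat/completions
```

With the SaaS option, the prompt and any data inside it travel to the provider; with the private option, the same request stays on infrastructure the company controls.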

Top five benefits of an on-premise large language model

Language models, and generative AI in general, are billed as the next productivity frontier for global businesses. According to McKinsey, generative AI could add the equivalent of $2.6 trillion to $4.4 trillion annually across the 63 use cases it analyzed, with a sizable impact across all sectors.

Deployed on a company’s infrastructure, LLMs become an even greater source of value as they address the downsides of public models.

Strong security

Solid security and adherence to internal data practices are the first and foremost benefits of running an LLM on-premise. With an on-premise LLM, you keep a tight rein over your data and can make sure its handling meets the security protocols and procedures you already have in place. Moreover, strict network isolation helps shield the model from external threats.

As data processing occurs within your own infrastructure, sensitive information never has to leave your network, which reduces the risk of leaks. This is crucial for compliance-heavy industries such as healthcare and finance. On-premise deployment also lets you manage access controls yourself and reduces your dependence on third-party providers.
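Access control and network isolation are ultimately enforced in code and configuration. As a minimal sketch, assuming a hypothetical gateway that sits in front of the model, the function below lets a prompt through only if the user’s role is on an allow-list and the target endpoint is a private or loopback IP address. The role names are invented for illustration, and a production setup would rely on your identity provider and network firewall rather than a hard-coded set.

```python
import ipaddress
from urllib.parse import urlparse

# Hypothetical role policy: which internal roles may send data to the model.
ALLOWED_ROLES = {"analyst", "support_agent"}


def endpoint_is_internal(url: str) -> bool:
    """Return True only if the URL points at a private or loopback IP address."""
    host = urlparse(url).hostname
    if host is None:
        return False
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # A hostname rather than an IP literal: refuse instead of guessing how it resolves.
        return False
    return addr.is_private or addr.is_loopback


def authorize_request(user_role: str, endpoint_url: str) -> bool:
    """Allow a prompt through only for permitted roles and internal endpoints."""
    return user_role in ALLOWED_ROLES and endpoint_is_internal(endpoint_url)


if __name__ == "__main__":
    print(authorize_request("analyst", "http://10.0.0.5:8000/v1"))    # True
    print(authorize_request("analyst", "https://api.openai.com/v1"))  # False: external host
    print(authorize_request("intern", "http://10.0.0.5:8000/v1"))     # False: role not allowed
```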

Custom functionality

When you deploy open models such as LLaMA locally, your developers can adjust them to match your specific needs, requirements, and business tasks. Fine-tuning such a model transforms a powerful yet general-purpose solution into a business-specific tool.

To tap into this benefit, you need a team of machine learning consultants and developers to fine-tune the model on your proprietary data. This allows the model to support specific tasks across your company and makes AI tools more effective in a variety of tasks, from personalized content creation to customer support and term extraction.
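Much of the fine-tuning work is preparing training examples. Many fine-tuning tools accept data as JSON Lines, one example per line, but the exact field names differ between frameworks; the `prompt`/`completion` schema below is an assumption for illustration. This minimal sketch converts hypothetical support-ticket records into that format and drops incomplete ones.

```python
import json
from typing import Iterable, Mapping


def to_jsonl(examples: Iterable[Mapping[str, str]]) -> str:
    """Serialize prompt/completion pairs as JSON Lines, dropping incomplete records."""
    lines = []
    for example in examples:
        prompt = example.get("prompt", "").strip()
        completion = example.get("completion", "").strip()
        if not prompt or not completion:
            continue  # an empty side teaches the model nothing useful
        lines.append(json.dumps({"prompt": prompt, "completion": completion}, ensure_ascii=False))
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical records exported from a support ticket system.
    records = [
        {"prompt": "Customer asks how to reset a password.",
         "completion": "Point them to Settings > Security > Reset password."},
        {"prompt": "Customer asks about invoice VAT.", "completion": ""},  # incomplete, skipped
    ]
    print(to_jsonl(records))
```

The resulting string can be written to a `.jsonl` file and passed to whichever fine-tuning framework your team uses, after checking the schema that framework expects.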

Reduced latency

Running a large language model locally or on a private cloud can also reduce latency, that is, the time between sending a request and receiving a response, because requests no longer have to travel over the public internet to an external provider. The actual gain depends on how quickly your own hardware can serve the model. This advantage matters most for applications that rely on real-time responses, such as chatbots.
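Whether a local deployment is actually faster is best settled by measurement. The minimal sketch below times repeated calls and reports nearest-rank percentiles, since tail latency (p95) often matters more for chatbots than the average. The `fake_model_call` function is a hypothetical stand-in; in practice you would time real requests to the hosted and the private deployment and compare the numbers.

```python
import math
import time
from typing import Callable, List


def measure_latency(call: Callable[[], object], runs: int = 20) -> List[float]:
    """Time `runs` invocations of `call` and return each latency in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000)
    return samples


def percentile(samples: List[float], pct: float) -> float:
    """Nearest-rank percentile of `samples`, with `pct` between 0 and 100."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


if __name__ == "__main__":
    # Stand-in for a real model call: replace with a request to each deployment.
    def fake_model_call() -> None:
        time.sleep(0.01)

    latencies = measure_latency(fake_model_call, runs=10)
    print(f"p50={percentile(latencies, 50):.1f} ms, p95={percentile(latencies, 95):.1f} ms")
```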

Cost reduction

Cost efficiency, including lower labor costs, is another LLM benefit. Provided you have the necessary hardware and a high enough usage volume, running a model on-premise can be cheaper than paying for cloud services. You also avoid vendor lock-in and third-party control over pricing.
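Whether on-premise is cheaper comes down to a break-even calculation: pay-per-token API fees grow with usage, while owned hardware is a largely fixed monthly cost. The minimal sketch below compares the two. All inputs in the example are hypothetical, and the model deliberately ignores factors such as staff time, electricity price changes, and hardware capacity limits, which a real estimate would need to include.

```python
def monthly_api_cost(tokens_per_month: float, price_per_million_tokens: float) -> float:
    """Pay-per-use cost of a hosted model for one month."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def monthly_onprem_cost(hardware_cost: float, amortization_months: int,
                        monthly_operating_cost: float) -> float:
    """Hardware spread over its useful life plus power, hosting, and maintenance."""
    return hardware_cost / amortization_months + monthly_operating_cost


def breakeven_tokens_per_month(price_per_million_tokens: float, hardware_cost: float,
                               amortization_months: int, monthly_operating_cost: float) -> float:
    """Monthly token volume above which running the model on-premise is cheaper."""
    fixed = monthly_onprem_cost(hardware_cost, amortization_months, monthly_operating_cost)
    return fixed / price_per_million_tokens * 1_000_000


if __name__ == "__main__":
    # Hypothetical inputs; substitute your own quotes and usage forecasts.
    volume = breakeven_tokens_per_month(
        price_per_million_tokens=10.0,
        hardware_cost=120_000,
        amortization_months=36,
        monthly_operating_cost=2_000,
    )
    print(f"Break-even at about {volume / 1e6:.0f} million tokens per month")  # about 533
```

Below the break-even volume the API is cheaper; above it, the fixed cost of owned hardware starts to pay off.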

As for indirect cost savings, locally deployed and fine-tuned LLMs can automate a variety of business tasks and thereby reduce labor costs. For instance, LLM-powered assistants can significantly reduce the workload of customer service teams, reverse admin creep, and streamline many routine manual tasks.

Revenue generation

The ability to generate higher revenues is another core benefit of an LLM. According to McKinsey, potential productivity gains driven by generative AI across functional areas and industries can reach up to $600 billion, with the exact impact depending on the functional area and the industry.

One key area where LLM benefits are already visible is marketing and advertising. By analyzing customer behavior and preferences, generative AI can create personalized, targeted content that resonates with customers. This can lead to higher engagement, more conversions, and, ultimately, more sales.

Consume or customize: how to maximize LLM advantages

Easy-to-access foundation models like ChatGPT and DALL-E are rapidly democratizing technology in business. But while most companies are making a foray into the technology with off-the-shelf models, forward-looking businesses are customizing foundation models to capitalize on them. Both approaches are viable, yet only one of them helps you make the most of LLM benefits.

Consume

This adoption strategy relies on API-based implementation of LLM applications. Companies access a ready-made model via an API and customize it only to a small degree. The go-to techniques here are prompt engineering approaches such as few-shot prompting, along with lightweight methods like prompt tuning and prefix tuning where the provider supports them.
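A common form of prompt engineering is few-shot prompting: the prompt contains a short instruction and a handful of worked examples, and the model continues the pattern for a new input. The minimal sketch below assembles such a prompt for a hypothetical ticket-routing task; the `Input:`/`Output:` layout is one convention among many, not a required format. Prompt tuning and prefix-style methods work differently, learning continuous prompt vectors rather than text, and are not shown here.

```python
from typing import List, Tuple


def build_few_shot_prompt(instruction: str, examples: List[Tuple[str, str]], query: str) -> str:
    """Combine an instruction, labeled examples, and the new input into one prompt."""
    parts = [instruction.strip(), ""]
    for text, label in examples:
        parts.append(f"Input: {text}\nOutput: {label}\n")
    # End with an open "Output:" so the model completes the pattern.
    parts.append(f"Input: {query}\nOutput:")
    return "\n".join(parts)


if __name__ == "__main__":
    # Hypothetical ticket-routing task.
    prompt = build_few_shot_prompt(
        "Route each support ticket to one of: billing, technical, other.",
        [
            ("I was charged twice this month.", "billing"),
            ("The app crashes when I upload a file.", "technical"),
        ],
        "How do I change the email on my invoice?",
    )
    print(prompt)
```

Because nothing about the model changes, this approach is quick to try, but the model’s behavior is limited to what a few examples in the prompt can convey.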

Although this approach makes an LLM more suitable for your business tasks, you still cannot fully leverage its advantages or make it a secure co-pilot for your teams.

Customize

When customizing a model, companies fine-tune it on their own data. With on-premise or private-cloud deployment, that proprietary data can be used for training without leaving the company’s infrastructure.
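Before fine-tuning, it is standard practice to hold back part of the data so you can check whether the customized model actually performs better on examples it has not seen. The minimal sketch below shuffles records reproducibly and splits off a validation set; the 10% fraction and the fixed seed are assumptions you would adjust to your dataset.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def train_validation_split(records: Sequence[T], validation_fraction: float = 0.1,
                           seed: int = 42) -> Tuple[List[T], List[T]]:
    """Shuffle records reproducibly and hold out a validation set."""
    if not 0 < validation_fraction < 1:
        raise ValueError("validation_fraction must be between 0 and 1")
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)  # fixed seed: same split on every run
    n_val = max(1, round(len(shuffled) * validation_fraction))
    return shuffled[n_val:], shuffled[:n_val]


if __name__ == "__main__":
    # Hypothetical dataset of 200 curated examples.
    train, val = train_validation_split([f"example-{i}" for i in range(200)])
    print(len(train), len(val))  # 180 20
```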

Customization enables the model to augment complex workflows and business processes, so the odds of maximizing the benefits of an LLM are higher than with the consume approach. Depending on the training data, a customized model can perform sentiment analysis, automate content creation, facilitate document management, and more.

How to prepare for LLM adoption step-by-step

Any AI consulting and development project requires in-depth planning and strategizing. Instead of jumping on the hype, decision-makers should first step back and estimate the impact and ROI of generative AI projects.

Develop an adoption strategy

First and foremost, you need a comprehensive strategy that outlines enablers of a successful gen AI adoption. These include technologies, competencies, governance frameworks, organizational shifts, and other levers.

A generative AI strategy should follow the same principles that guide any AI-powered organization, so make sure to democratize access to enterprise data and think through the necessary process transformations.


Assemble a cross-disciplinary team

It’s hardly possible to reap the benefits of an LLM without the right domain experience and technical proficiency. Therefore, you should bring together a cross-disciplinary team that will help you identify opportunities for LLM adoption and implement deployments. A team with industry knowledge will help you mitigate business risks and meet industry requirements.

Identify business use cases

Not all processes are ripe for artificial intelligence, whatever form it takes. Each candidate use case should be carefully evaluated and assessed against possible risks. Keep in mind that ROI is harder to calculate for generative AI projects than for conventional ones because of the technology’s transformational nature.
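Because the benefits of a generative AI project are uncertain, one practical approach is to compute ROI for several scenarios rather than a single point estimate. The minimal sketch below uses the simple formula ROI = (benefit - cost) / cost over one year; the low, base, and high figures are hypothetical and only illustrate how widely the result can swing.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Scenario:
    name: str
    annual_benefit: float  # estimated savings or added revenue per year
    annual_cost: float     # licences, infrastructure, staff, and maintenance


def roi(scenario: Scenario) -> float:
    """Return ROI as a fraction: (benefit - cost) / cost."""
    return (scenario.annual_benefit - scenario.annual_cost) / scenario.annual_cost


if __name__ == "__main__":
    # Hypothetical estimates for a single use case.
    scenarios: List[Scenario] = [
        Scenario("low", annual_benefit=150_000, annual_cost=200_000),
        Scenario("base", annual_benefit=300_000, annual_cost=200_000),
        Scenario("high", annual_benefit=500_000, annual_cost=200_000),
    ]
    for s in scenarios:
        print(f"{s.name}: {roi(s):+.0%}")  # low: -25%, base: +50%, high: +150%
```

If the low scenario is unacceptable, that is a signal to narrow the use case or gather better data before committing.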

Get a handle on data

The only way you can make an LLM reason about your proprietary data is to include that data in the model’s prompt or fine-tune the model with it. Therefore, easy access, holistic collection, and careful curation of proprietary data are vital to setting your project off to a good start. Some business cases might require third-party data.
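Including proprietary data in the prompt is usually done with retrieval-augmented generation: relevant passages are looked up first and placed in the prompt alongside the question. The minimal sketch below illustrates the idea with a deliberately crude word-overlap score; real systems typically use embedding-based vector search instead, and the sample documents are hypothetical.

```python
import re
from typing import List, Set


def tokenize(text: str) -> Set[str]:
    """Lower-case word set used for a crude relevance score."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: List[str], k: int = 2) -> List[str]:
    """Return up to k documents that share the most words with the query."""
    query_words = tokenize(query)
    ranked = sorted(documents, key=lambda doc: len(query_words & tokenize(doc)), reverse=True)
    return [doc for doc in ranked[:k] if query_words & tokenize(doc)]


def build_grounded_prompt(query: str, documents: List[str], k: int = 2) -> str:
    """Place the retrieved passages in the prompt so the model can answer from them."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return (
        "Answer using only the context below. If the answer is not there, say so.\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    # Hypothetical internal knowledge-base snippets.
    docs = [
        "Refunds are processed within 14 days.",
        "Our office opens at 9 am.",
        "Refund requests need an order number.",
    ]
    print(build_grounded_prompt("How are refund requests processed?", docs))
```

The quality of the answer depends directly on what retrieval finds, which is why curated, accessible proprietary data matters so much.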

Embrace the accelerating value of generative AI

Generative AI, and large language models in particular, present untapped opportunities for global businesses. But to seize them, it’s not enough to consume a model as is; fine-tuning it with your own proprietary data is what unlocks the hidden value. Stronger security, custom functionality, and revenue generation are just a few of the benefits large language models offer.

Kick-start your LLM project

InData Labs is an AI-focused company with almost a decade of experience in delivering custom NLP and ML solutions to global organizations. Contact us, and we’ll help you take advantage of the many benefits custom LLMs deliver.