Image processing is a salient part of automated digital analysis. In particular, image processing techniques in computer vision help machines gain human-like insights from digital input.

The visual stimuli can take many forms, including video frames, camera views, or multidimensional data. Manipulating digital input that comes in such diverse formats and shapes is quite challenging.

In this post, we will discuss the nuts and bolts of digital image processing and machine vision. We’ll also examine some of the most widely used visual transformation techniques and see how they support accurate visual analysis.

Digital image processing vs computer vision: what’s the difference?

In the past decade, the world has seen tremendous advances in computer vision and image processing techniques.

The aim of machine vision is to discern valuable insights from visual data and apply them to specific tasks. This technology extracts information from digital images and videos. It then uses this information to identify objects or scenes for various tasks, such as surveillance, manufacturing quality control, human-computer interaction, and more. Examples of computer vision applications include autopilot functionality, fraud management systems, medical imaging, and others.

Computer vision aims to detect and interpret visuals in the same way humans do. It distinguishes, classifies, and sorts visual data based on important characteristics such as size, color, and so on. Its overriding goal is to mimic the intricacy of the human visual system while giving computers the ability to comprehend the digital world.

Image processing is a procedure for manipulating images for various tasks, such as image enhancement, feature extraction, and others. Computer algorithms have paved the way for advanced image processing. Yet both the input and the output are images, and no intelligent inference needs to be made about the input itself. Thus, image processing is geared towards transforming the image, for example by smoothing it or adjusting its contrast.

As we can see, the main difference between computer vision and image processing is that machine vision systems glean valuable insights for picture analysis and recognition software. Image processing, on the other hand, transforms the image without interpreting its content.

Computer vision and image processing: powerful match

Now that we’ve grappled with the differences, let’s see how these two join forces and complement each other. Intelligent algorithms have opened a new era in real-time applications like autonomous driving cars, object tracking, and defect detection. So how do the two overlap?

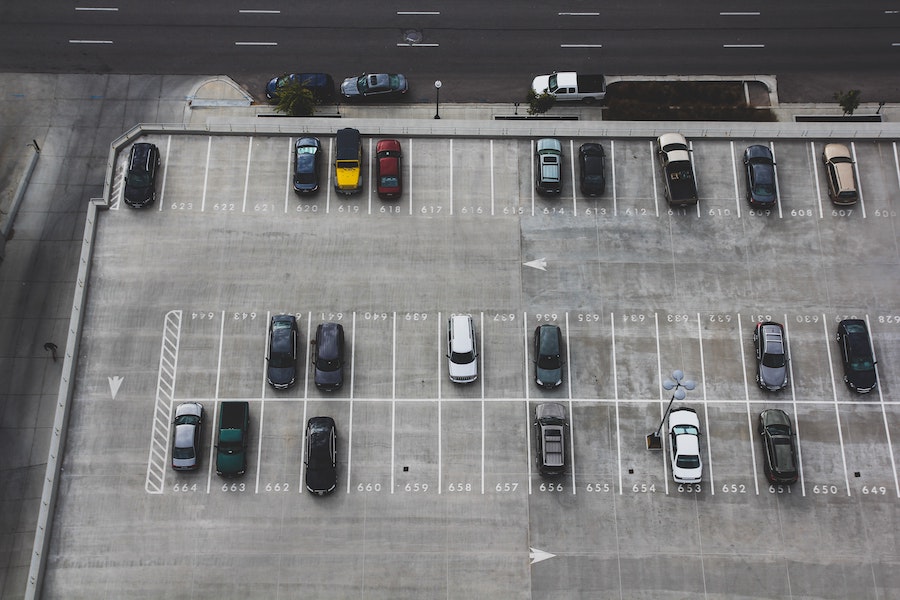

Image processing is one of the building blocks of machine vision, which makes it one of the robust analysis methods. A computer vision system includes a great number of components such as cameras, lighting devices, and digital processing techniques.

Source: Unsplash

Processing software is thus one part of the whole setup, preparing the image for further analysis. For example, image editing and restoration help remove visible damage from digital copies for more accurate image interpretation. Both computer vision and image processing can rely on machine learning algorithms.

Where is digital processing used?

Image processing is a popular technique in image enhancement and analysis. It is also frequently applied to manipulate the inputs for the purpose of detection, classification or identification as well as measuring and mapping features.

This technology is a good fit for surveillance systems, healthcare hardware (like MRI), satellite imaging, weather forecasting and many other fields. Robot vision, for example, is a product of artificial intelligence and, among other things, visual interpretation. Algorithms for digital image processing and computer vision augment and interpret visual input from the surroundings.

In medicine, visual interpretation underpins medical imaging. With the growing amount of medical data, automation has become indispensable for radiologists. Processing algorithms help uncover many types of anomalies at an early stage. Automated analysis, for example, fosters early detection of cancerous cells by analyzing images from patients. Nowadays, computer algorithms can help radiologists interpret breast MRI scans with more accuracy.

In pattern recognition, image processing holds great potential for aiding computer-assisted diagnosis, handwriting recognition, and image recognition. Optical character recognition is a vivid example of this application. Here, image processing techniques analyze the light and dark patterns that form letters and numbers to turn the scanned image into text.

There’s one thing that holds true for most use cases. Image processing often goes hand in hand with computer vision. Together, they form a robust analysis facility. With that said, let’s go over image processing techniques in computer vision. Here’s how they assist machines in processing raw input images and preparing them for specific tasks.

Anisotropic diffusion

Improving image quality is an essential part of most computer vision implementations. Systems can capture images under different lighting conditions, from various angles, and at different resolutions (DPI). Therefore, enhancement techniques are a must for accurate interpretation.

Noise reduction, in particular, helps get rid of Gaussian noise as well as salt-and-pepper noise. The former typically arises in the sensor, for example under poor lighting, while the latter shows up as sparse light and dark pixels. Anisotropic diffusion applies a filtering approach to reduce image noise. In doing so, it preserves important parts of the image content, such as edges, that are necessary for image interpretation.

There are many examples of anisotropic diffusion used in machine vision applications. These may include image denoising, super-resolution, and stereo reconstruction. For instance, in magnetic resonance images, this technique suppresses high-frequency noise while maintaining the image edges.
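
To make the idea more concrete, here is a minimal NumPy sketch of one common formulation of anisotropic diffusion (the Perona-Malik scheme). The function name and the `n_iter`, `kappa`, and `step` parameters are illustrative assumptions, not part of any particular library, and the values would need tuning for real images.

```python
import numpy as np

def anisotropic_diffusion(image, n_iter=20, kappa=0.1, step=0.2):
    """Perona-Malik style diffusion on a 2-D grayscale image (illustrative sketch)."""
    img = image.astype(np.float64).copy()
    for _ in range(n_iter):
        # Differences to the four nearest neighbours, with edge-replicated borders.
        padded = np.pad(img, 1, mode="edge")
        north = padded[:-2, 1:-1] - img
        south = padded[2:, 1:-1] - img
        west = padded[1:-1, :-2] - img
        east = padded[1:-1, 2:] - img
        # Conduction is close to 1 where differences are small (flat, noisy areas)
        # and close to 0 where they are large (edges), so edges are not smoothed.
        flux = sum(np.exp(-(d / kappa) ** 2) * d for d in (north, south, west, east))
        # step should stay at or below 0.25 for this 4-neighbour scheme to be stable.
        img += step * flux
    return img
```

Here `kappa` sets the contrast level above which a difference is treated as an edge: a larger value smooths more aggressively, a smaller one preserves weaker edges but also keeps more noise.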

Image restoration

Corrupted input is a common problem that arises when visuals are captured by low-quality sensor devices. Such images may be characterized by blurriness, lack of detail, or noise that makes them useless for most purposes. Recent leaps in deep neural networks have improved the state-of-the-art results for this challenge.

Most of these image restoration techniques aim at improving the visual quality of degraded images by restoring their details. Image restoration is the process of replacing corrupted image parts with realistic fragments. In this case, the algorithm relies on an existing undamaged part of the image to replace the corrupted one.

Overall, digital image restoration involves taking an existing image and either removing noise or adding information that was not captured by the sensor. In computer vision, this technique can also reverse the process that blurred the image. As a result, the system obtains a high-quality image from a corrupted input image.
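
As an illustration of reversing a blur, below is a small sketch of Wiener deconvolution, one classical restoration method among many. It assumes the blur kernel is known and that the blur wraps around the image borders, which is rarely true in practice. The `wiener_deblur` name and the `noise_to_signal` parameter are hypothetical.

```python
import numpy as np

def wiener_deblur(blurred, kernel, noise_to_signal=1e-3):
    """Approximately invert a known blur in the frequency domain (illustrative sketch)."""
    # Frequency response of the blur kernel, zero-padded to the image size.
    H = np.fft.fft2(kernel, s=blurred.shape)
    G = np.fft.fft2(blurred)
    # Wiener filter: behaves like 1/H where the blur kept the signal,
    # and damps frequencies that the blur almost wiped out.
    restored = np.conj(H) / (np.abs(H) ** 2 + noise_to_signal) * G
    return np.real(np.fft.ifft2(restored))
```

The `noise_to_signal` term is what keeps the inversion from amplifying noise: setting it to zero would give a plain inverse filter, which tends to break down wherever the kernel's frequency response is close to zero.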

Hidden Markov models

Hidden Markov models, or HMMs, are a well-known technique for computer vision and image processing. HMMs use statistical properties of signals and focus on their spatial characteristics, which makes them well suited for recognition tasks.

Technically, the recognition task falls into two subtasks. At the training stage, machine learning engineers build a model for each person based on a set of different images that feature that person’s face. At the recognition stage, the system assigns a sample image to the model that explains it with the highest probability. Although this technique originated in speech recognition, it’s now widely used for computer vision solutions.
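
The recognition stage can be sketched with the forward algorithm for a discrete HMM. In this simplified example, each face image is assumed to have already been converted into a short sequence of symbols (for instance, quantized features of horizontal strips), and the two models with their probabilities are made-up placeholders rather than trained values.

```python
import numpy as np

def sequence_likelihood(obs, start, trans, emit):
    """Forward algorithm: probability of an observation sequence under one HMM."""
    # alpha[i] = probability of the observations so far, ending in hidden state i.
    alpha = start * emit[:, obs[0]]
    for symbol in obs[1:]:
        alpha = (alpha @ trans) * emit[:, symbol]
    return alpha.sum()

def classify(obs, models):
    """Pick the model (for example, one per person) that best explains the sequence."""
    scores = {name: sequence_likelihood(obs, *params) for name, params in models.items()}
    return max(scores, key=scores.get), scores

# Hypothetical two-state models over two observation symbols.
start = np.array([0.6, 0.4])
trans = np.array([[0.7, 0.3], [0.4, 0.6]])
models = {
    "person_a": (start, trans, np.array([[0.9, 0.1], [0.8, 0.2]])),
    "person_b": (start, trans, np.array([[0.2, 0.8], [0.1, 0.9]])),
}
label, scores = classify([0, 0, 1, 0], models)
```

For long sequences, a practical implementation would work with log-probabilities or rescale `alpha` at each step to avoid numerical underflow.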

Neural networks

Computer vision owes its skyrocketing growth to convolutional neural networks, or CNNs, which makes this technique one of the pillars of interpretation tasks. Neural networks are mathematical models loosely inspired by the human brain and are used to create machines with artificial intelligence.

The secret of their success is directly linked with the availability of large training datasets. For example, the ImageNet Large Scale Visual Recognition Challenge, with over 1 million images, is used to evaluate modern neural networks. However, adopting this technique for image processing calls for in-depth expertise with a focus on data preparation and network optimization.

For visual analysis, CNNs learn in a way that loosely resembles human learning. They examine the input for patterns and integrate those patterns to develop rules for processing data or identifying objects. Thus, if you feed the network two sets of images, with and without apples, it will learn to identify pictures of apples without being given an explicit definition of an apple. In a way, it’s similar to teaching your child the basics of the surroundings.

Other types of neural networks used in computer vision include U-Nets, residual networks, YOLO, etc. Traditionally, those types are classified based on structure, data flow, density, layers, depth, and activation filters. Each of them takes its own approach and is best fit for specific tasks. YOLO, for example, treats object detection as a regression problem and processes the whole image at once.

Linear filtering

Any type of data filtering is very similar to looking through colored glass. The result depends on the color of the glass you’re looking through. However, filtering allows for a much greater variety of effects than experimenting with different pieces of glass.

As such, filtering refers to an operation which results in an image of the same size obtained from the original image according to certain rules. The rules which set the filtering can be very diverse.

Linear filters are a family of filters with a very simple mathematical description. At the same time, they allow you to achieve a wide variety of effects. For vision tasks, they are used to modify or enhance the input.

The main differentiator of this model is that each output pixel is computed as a weighted sum of the input pixels around it, and the same weights are applied at every position, so the filter behaves consistently across all images. Many advanced techniques stem from linear filtering and its concepts.

Besides enhancement, you can use linear filtering for generic tasks like contrast improvement, denoising, and sharpening. This technique can also highlight or eliminate certain features. Restoration, reconstruction and segmentation go into its application area as well.
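
A linear filter can be sketched in a few lines of NumPy. The example below slides a small kernel of weights over a grayscale image and returns an image of the same size. The `linear_filter` helper and the two kernels are illustrative, and the sketch assumes kernels with odd side lengths.

```python
import numpy as np

def linear_filter(image, kernel):
    """Apply a small kernel to a 2-D image; the output has the same size as the input."""
    kh, kw = kernel.shape
    h, w = image.shape
    # Replicate border pixels so that every output pixel has a full neighbourhood.
    padded = np.pad(image.astype(np.float64), ((kh // 2, kh // 2), (kw // 2, kw // 2)), mode="edge")
    out = np.zeros((h, w), dtype=np.float64)
    # Each output pixel is a weighted sum of its neighbours, with the same weights everywhere.
    for dy in range(kh):
        for dx in range(kw):
            out += kernel[dy, dx] * padded[dy:dy + h, dx:dx + w]
    return out

# Two classic kernels: averaging for denoising, and a simple sharpening mask.
box_blur = np.full((3, 3), 1 / 9)
sharpen = np.array([[0, -1, 0], [-1, 5, -1], [0, -1, 0]], dtype=float)
```

Swapping the kernel is all it takes to move from denoising (`box_blur`) to sharpening (`sharpen`), which is why such a simple description covers so many effects.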

Independent component analysis

Independent component analysis or ICA is a computing method based on dividing a multidimensional signal into isolated subcomponents (components), which are independent of each other. It is a widely used unsupervised machine learning method that can be applied to extract the independent sources from multivariate data.

In traditional methods, the components are treated as fixed and unknown. In ICA, these components are treated as random variables that are statistically independent, and the observed data is modeled as a mixture of them. One common way of finding these latent factors is maximum likelihood estimation.

In simple words, you can apply this algorithm to transform the original image and extract thematic data for further classification. In even more layman’s terms, ICA can help you turn blurry visuals into higher-quality images.

Since ICA can uncover the hidden aspects in multivariate signals, this technique has revolutionized image recognition and artificial intelligence applications. By uncovering non-Gaussian factors in a dataset, you can produce an effective result, be it visuals or voice signals.
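
The sketch below shows the unmixing idea with a compact NumPy version of the FastICA algorithm, one popular way to estimate independent components. It is a simplified illustration rather than a production implementation: the `fast_ica` name and its parameters are assumptions, and each row of `X` is taken to be one observed mixture (for example, a flattened image).

```python
import numpy as np

def fast_ica(X, n_iter=200, seed=0):
    """Estimate independent sources from mixed signals X of shape (n_signals, n_samples)."""
    X = X - X.mean(axis=1, keepdims=True)
    # Whitening: decorrelate the mixtures and scale them to unit variance.
    eigvals, eigvecs = np.linalg.eigh(np.cov(X))
    Z = eigvecs @ np.diag(eigvals ** -0.5) @ eigvecs.T @ X
    n_signals, n_samples = Z.shape
    W = np.random.default_rng(seed).normal(size=(n_signals, n_signals))
    for _ in range(n_iter):
        # Symmetric decorrelation keeps the rows of W orthonormal.
        u, _, vt = np.linalg.svd(W)
        W = u @ vt
        # Fixed-point update that pushes the outputs towards non-Gaussianity.
        g = np.tanh(W @ Z)
        W = (g @ Z.T) / n_samples - np.diag((1 - g ** 2).mean(axis=1)) @ W
    u, _, vt = np.linalg.svd(W)
    return (u @ vt) @ Z
```

The recovered components come back in arbitrary order and with arbitrary sign and scale, so they are usually matched against references by correlation rather than compared directly.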

Resizing

Now let’s examine simpler techniques that nevertheless make a huge difference. Before computer vision is used for identification tasks, the image is resized to a specific resolution. The spatial resolution for such tasks is quite small, which facilitates training and inference.

Also, the effect of image resizing is visible in the accuracy of the tasks the network is trained for. Traditionally, most frameworks get by with ready-made image resizers. Yet, off-the-shelf resizers can limit the task performance of the trained networks. Therefore, engineers may opt for learned resizers to boost performance.

Other basic transformations may include cropping, flipping, and rotating. All of them help prepare the visual for further use and analysis by machine learning algorithms and machine vision setups.
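
These basic transformations are easy to sketch with NumPy arrays. The helpers below are hypothetical and use nearest-neighbour sampling, which is the simplest possible resizer. Real pipelines typically rely on a library resizer with better interpolation.

```python
import numpy as np

def resize_nearest(image, new_h, new_w):
    """Resize by picking, for each output pixel, the nearest source pixel."""
    h, w = image.shape[:2]
    rows = np.arange(new_h) * h // new_h
    cols = np.arange(new_w) * w // new_w
    return image[rows[:, None], cols]

def center_crop(image, crop_h, crop_w):
    """Cut a crop_h x crop_w window out of the middle of the image."""
    h, w = image.shape[:2]
    top, left = (h - crop_h) // 2, (w - crop_w) // 2
    return image[top:top + crop_h, left:left + crop_w]

image = np.arange(16).reshape(4, 4)   # toy 4x4 grayscale image
small = resize_nearest(image, 2, 2)   # downscale to 2x2
cropped = center_crop(image, 2, 2)    # keep the central 2x2 window
flipped = np.fliplr(image)            # horizontal flip
rotated = np.rot90(image)             # 90-degree counterclockwise rotation
```

The same helpers work on color images stored as height x width x channels arrays, since only the first two axes are indexed.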

The final word

Computer vision has come a long way in recent years. Yet, there are still many challenges that must be overcome before computers can truly understand visuals like humans do.

Machine vision remains a complex problem due to the number of steps required to extract information from an input. These include camera calibration, feature extraction, image segmentation, recognition systems and others.

Digital visual processing is a critical step in the pre-analysis stages of computer vision. It enhances the image for later use and ensures the machine learning algorithms yield accurate results.
